Generate a GitHub Actions workflow file from dotnet CLI

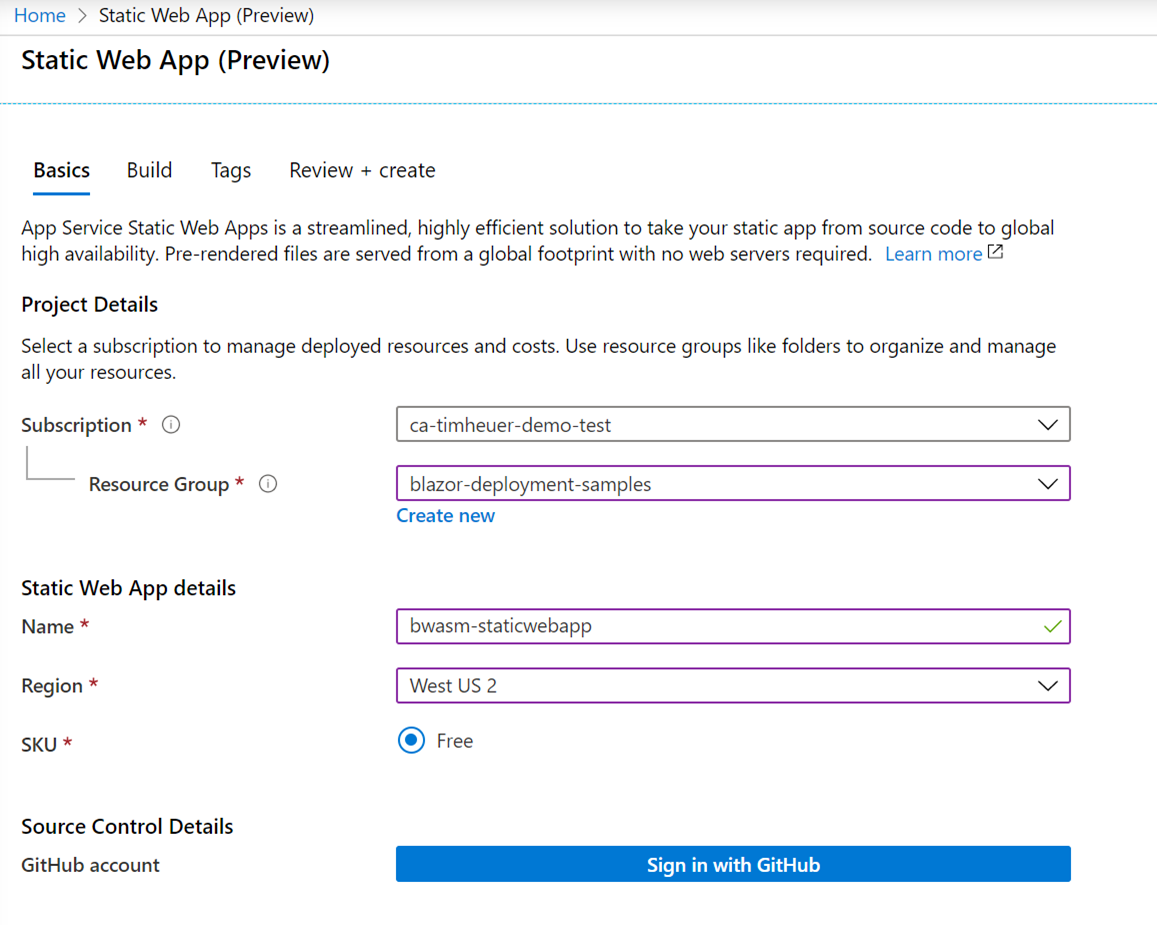

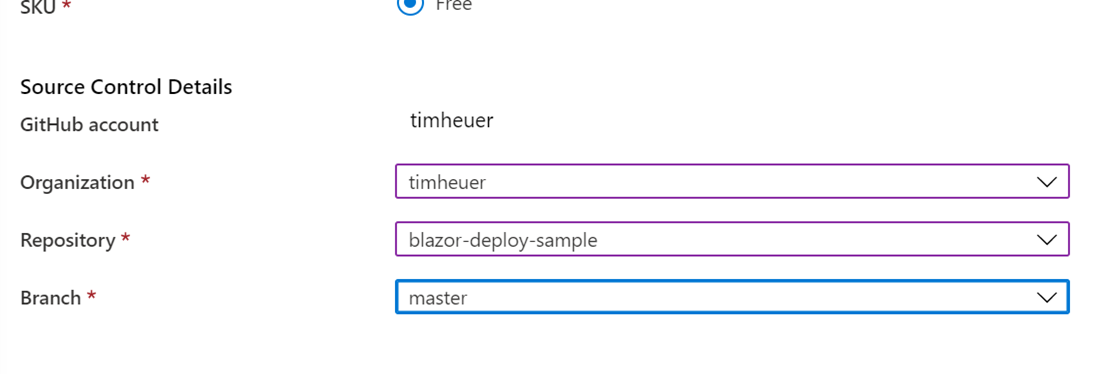

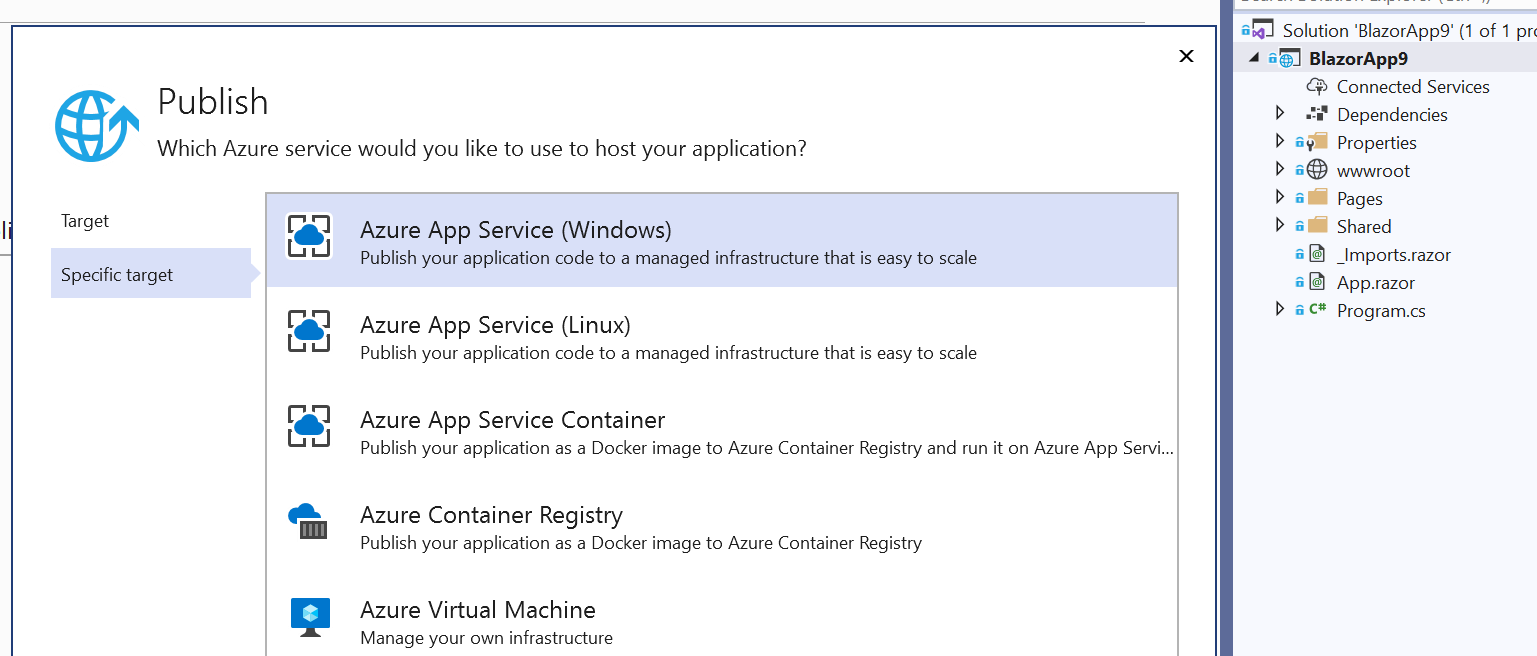

I’ve become a huge fan of DevOps and have been spending more time ensuring my own projects have good CI/CD automation using GitHub Actions. The team I work on in Visual Studio for .NET develops the “right click publish” feature that has become a tag line for DevOps folks (okay, maybe not in the most flattering way!). We know that a LOT of developers use the Publish workflow in Visual Studio for their .NET applications for various reasons. In reaching out to a sampling of them and discussing CI/CD, we heard a lot of folks say they didn’t have the time to figure it out, it was too confusing, there was no simple way to get started, etc. In this past release we aimed to improve that experience for users of Publish and help them get started very quickly with CI/CD for apps deploying to Azure. Our new feature lets you generate a GitHub Actions workflow file, with the Publish wizard walking you through it. In the end you have a good getting-started workflow. I did a quick video on it to demonstrate how easy it is:

One of my favorite features we've been working on in @VisualStudio. Yes, Right Click Publish!!!! #devops #dotnet pic.twitter.com/Jy2jSWplam

— Tim Heuer (@timheuer) November 3, 2020

It really is that simple!

Making it simple from the start

I have to admit though, as much as I have been doing this, the YAML still is not sticking in my memory enough to type from scratch (dang you IntelliSense for making me lazy!). There are also times where I’m not deploying to Azure but still want CI/CD to something like NuGet for my packages. I still want the flexibility to get started quickly and to ensure that as my project grows I’m not waiting until the last minute to add more to my workflow. I just saw Damian comment on this recently as well:

100% this.

— Damian Brady #BLM (@damovisa) November 2, 2020

Create a pipeline to deploy Hello World, then build on that. You'll only have to tweak the pipeline as you go instead of trying to figure out how to deploy this big complex thing at the end. https://t.co/DV8J1IXtQW

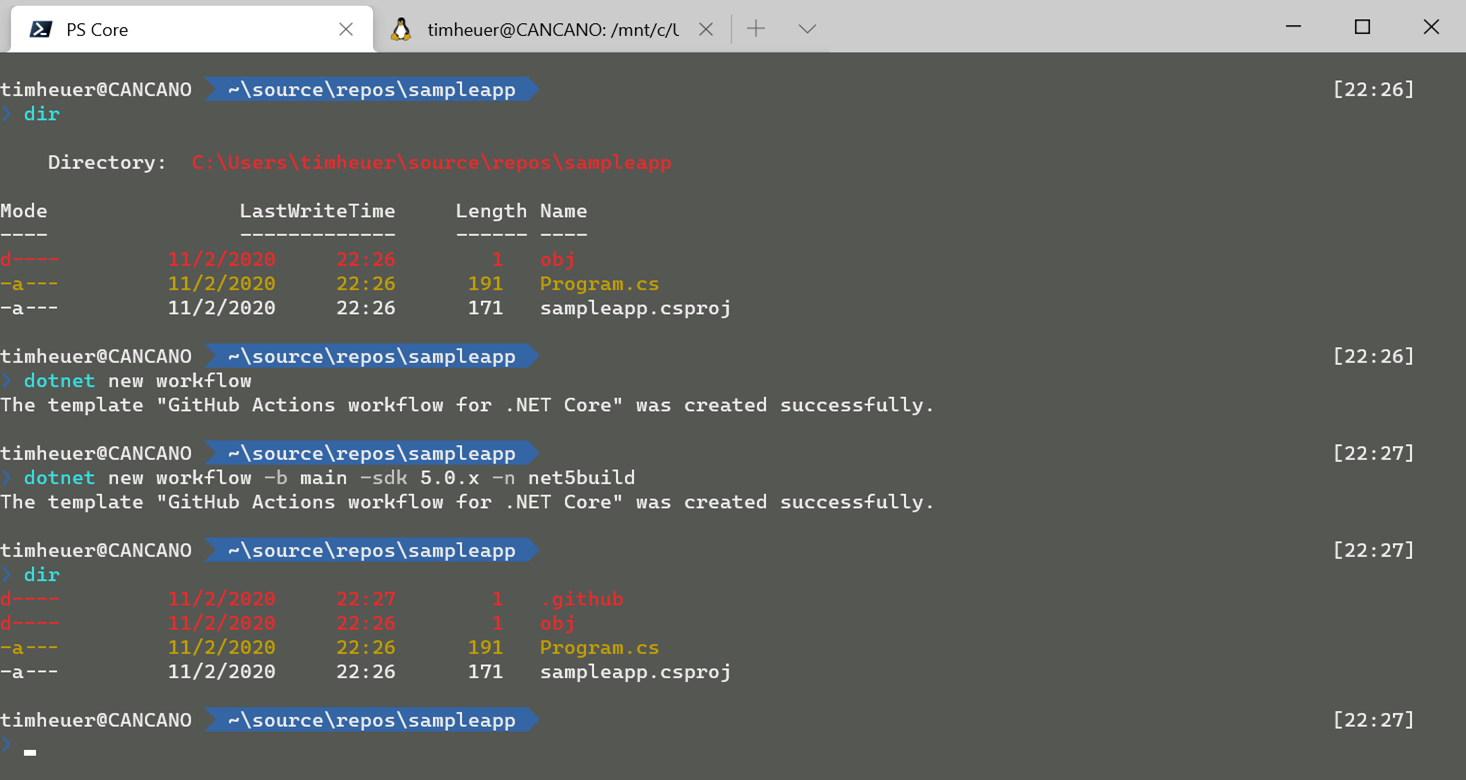

I totally agree! Recently I found myself repeatedly going back to older repos to copy/paste from existing workflows I had. Sure, I can do that because I’m good at copying/pasting, but it was just frustrating to switch context for even that little bit. My searching may suck, but I didn’t see a quick solution to this (please point it out in the comments below if I missed a better one!!!). So I created a quick `dotnet new` way of doing this for my projects from the CLI.

I created a simple item template that can be called using the `dotnet new` command from the CLI. Calling this in simplest form:

    dotnet new workflow

will create a `.github\workflows\foo.yaml` file under the directory you call it from (where ‘foo’ is the name of your folder), with the default content of a workflow for .NET Core that restores/builds/tests your project (using a default SDK version and ‘main’ as the branch). You can customize the output a bit more with a command like:

    dotnet new workflow --sdk-version 3.1.403 -n build -b your_branch_name

This lets you specify an SDK version, a name for the .yaml file, and the branch that triggers the workflow. An example of the output is here:

name: "Build"

on:

push:

branches:

- main

paths-ignore:

- '**/*.md'

- '**/*.gitignore'

- '**/*.gitattributes'

workflow_dispatch:

branches:

- main

paths-ignore:

- '**/*.md'

- '**/*.gitignore'

- '**/*.gitattributes'

jobs:

build:

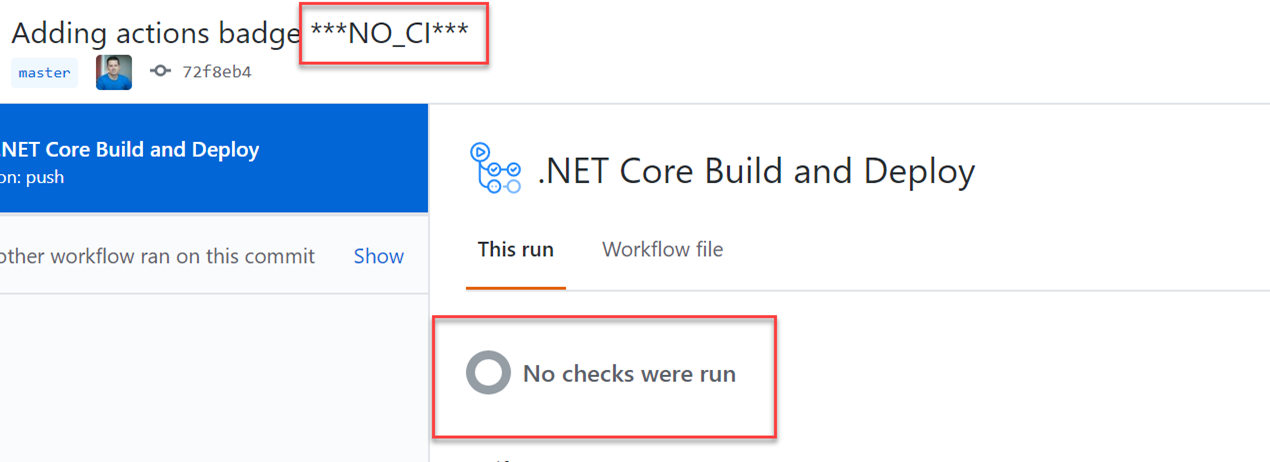

if: github.event_name == 'push' && contains(toJson(github.event.commits), '***NO_CI***') == false && contains(toJson(github.event.commits), '[ci skip]') == false && contains(toJson(github.event.commits), '[skip ci]') == false

name: Build

runs-on: ubuntu-latest

env:

DOTNET_CLI_TELEMETRY_OPTOUT: 1

DOTNET_SKIP_FIRST_TIME_EXPERIENCE: 1

DOTNET_NOLOGO: true

DOTNET_GENERATE_ASPNET_CERTIFICATE: false

DOTNET_ADD_GLOBAL_TOOLS_TO_PATH: false

DOTNET_MULTILEVEL_LOOKUP: 0

steps:

- uses: actions/checkout@v2

- name: Setup .NET Core SDK

uses: actions/setup-dotnet@v1

with:

dotnet-version: 3.1.x

- name: Restore

run: dotnet restore

- name: Build

run: dotnet build --configuration Release --no-restore

- name: Test

run: dotnet test

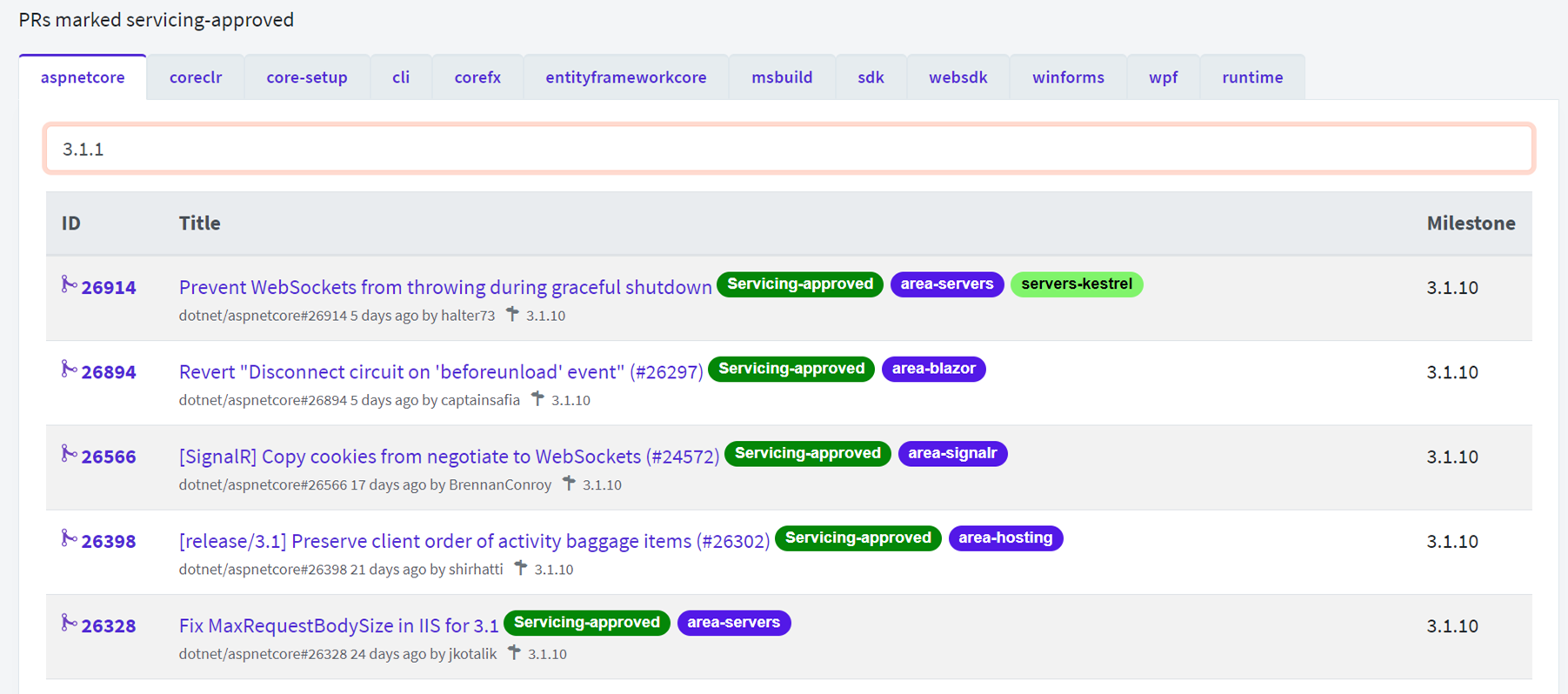

You can see the areas that would be replaced by input parameters in this example: the branch name under `push` and under `workflow_dispatch`, and the `dotnet-version` value (these are the defaults). This isn’t meant to be your final workflow, but as Damian suggests, it is good practice to start right from “File…New Project” and build up the workflow as you go along, rather than wait until the end to cobble everything together. For me, now I just need to add my specific NuGet deployment steps when I’m ready to do so.
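One piece of the generated file worth a second look is the job-level `if:` condition. As written, it lets the job run only for `push` events whose commit data contains none of the markers `***NO_CI***`, `[ci skip]`, or `[skip ci]`, which also means a manually dispatched run would not pass it. Here is a minimal shell sketch of that logic for reasoning about it locally. The function name `should_run_build` is made up for illustration, it takes plain commit text rather than the JSON that `toJson(github.event.commits)` produces, and it assumes GitHub's `contains()` compares strings without regard to case.

```shell
# Rough local model of the job-level `if:` in the generated workflow.
# Hypothetical helper: should_run_build is NOT part of the template.
#
# Usage: should_run_build <event_name> <commit_text>
# Returns 0 when the job would run, 1 when it would be skipped.
should_run_build() {
  local event_name="$1"
  local commits marker

  # Assumption: contains() ignores case, so compare everything in lower case.
  commits=$(printf '%s' "$2" | tr '[:upper:]' '[:lower:]')

  # The condition only passes for push events.
  [ "$event_name" = "push" ] || return 1

  # Any one of these markers in the commit data skips the job.
  for marker in '***no_ci***' '[ci skip]' '[skip ci]'; do
    case "$commits" in
      *"$marker"*) return 1 ;;   # quoted, so the marker is matched literally
    esac
  done
  return 0
}
```

For example, `should_run_build push "Update README [skip ci]" || echo "job would be skipped"` prints the message, since the commit text carries one of the skip markers.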

Installing and feature wishes

If you find this helpful feel free to install this template from NuGet using:

    dotnet new --install TimHeuer.GitHubActions.Templates

You can find the package at TimHeuer.GitHubActions.Templates, which also has the link to the repo if you see awesome changes to make or horrible bugs. This is a simple item template, so there are some things I wish it did automatically but it doesn’t. Honestly I started out making a global tool that would solve some of these but it felt a bit overkill. For example:

- It adds the workflow file relative to where you run the command. Workflows need to live in `.github/workflows` at the root of your repo, so you need to run this from the root of your local repo. Otherwise it is just going to add some folders in random places that won’t work.

- It won’t auto-detect the SDK you are using. Not horrible, but it would be nice for it to say “oh, you are a .NET 5 app, then this is the SDK you need.”
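The first point can be worked around with a small wrapper that finds the repo root before calling the template. The sketch below is one way to do that, not something the template ships: `find_repo_root` is a hypothetical helper, and it assumes the root is the nearest parent directory containing a `.git` entry.

```shell
# Hypothetical helper: locate the repository root by walking up the tree.
#
# Usage: find_repo_root [start_dir]
# Prints the root path, or returns 1 if no .git entry is found.
find_repo_root() {
  local dir
  # Resolve the starting directory (default: current) to an absolute path.
  dir=$(cd "${1:-.}" >/dev/null && pwd) || return 1

  while :; do
    # .git is a directory in a normal clone and a file in worktrees/submodules.
    if [ -e "$dir/.git" ]; then
      printf '%s\n' "$dir"
      return 0
    fi
    # Stop once the filesystem root has been checked.
    [ "$dir" = "/" ] && return 1
    dir=$(dirname "$dir")
  done
}
```

With that in place, something like `root=$(find_repo_root) && (cd "$root" && dotnet new workflow)` would generate the file at the root no matter which subfolder you happen to be in.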

Both of these could be solved with more access to the project system and in a global tool, but again, they are minor in my eyes. Maybe I’ll get around to solving them, but selfishly I’m good for now!
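Short of a global tool, the SDK guess can be roughly approximated from the project file. The following is only a heuristic sketch: `guess_sdk_version` is a hypothetical helper, it reads the first single `<TargetFramework>` value it finds (multi-targeted projects using `<TargetFrameworks>` are ignored), and it assumes a pattern such as `3.1.x` is acceptable because that is what appears as `dotnet-version` in the default output.

```shell
# Hypothetical helper: guess an SDK version pattern from a project file.
#
# Usage: guess_sdk_version path/to/App.csproj
# Prints e.g. "3.1.x" or "5.0.x"; returns 1 when nothing usable is found.
guess_sdk_version() {
  local csproj="$1" tfm

  # Pull the first <TargetFramework> value, e.g. netcoreapp3.1 or net5.0.
  tfm=$(sed -n 's:.*<TargetFramework>\([^<]*\)</TargetFramework>.*:\1:p' "$csproj" | head -n 1)

  # Drop any platform suffix such as "-windows".
  tfm=${tfm%%-*}

  case "$tfm" in
    netcoreapp[0-9]*.[0-9]*) printf '%s.x\n' "${tfm#netcoreapp}" ;;
    netstandard*)            return 1 ;;  # library target, not tied to one SDK
    net[0-9]*.[0-9]*)        printf '%s.x\n' "${tfm#net}" ;;
    *)                       return 1 ;;  # .NET Framework or unrecognized
  esac
}
```

The result could then be passed along as `dotnet new workflow --sdk-version "$(guess_sdk_version MyApp.csproj)"`, where `MyApp.csproj` stands in for your own project file.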

I just wanted to share this little tool that has become helpful for me, hope it helps you a bit!